Context Entropy: Why Your AI Memory Is Leaking

Have you ever experienced this?

It's deep in the night, and you're rushing to meet a deadline for a TUI project. You distinctly remember that about two weeks ago, late at night, you asked an AI to help you write a piece of code for TUI layout. That code used a library whose name you can't quite recall—something like textual maybe—and the logic was elegant, even handling some extreme edge cases for terminal compatibility.

At that time, you sighed, "This AI is godlike."

Now, two weeks later. A new requirement comes in, and you need to reuse that logic.

Confidently, you open your terminal and type claude -r. Or you switch to Cursor, your finger scrolling madly on the trackpad, trying to find that moment in the hundreds of history records in the sidebar.

But what greets you is a list 50 items long. The titles are all casual "Fix bug", "Update code", "Refactor", or simply empty.

Your heart starts racing.

You don't give up. You summon grep. You search frantically for the keyword textual in your ~/.claude/ directory. Green characters flash across the screen—you found it! But when you look closer, it's a blob of compressed, escaped JSON string. You see "content": "I think we should use...", but you can't tell if this sentence was said before or after a file modification. You can't see what the diff was at that time.

You try to reconstruct that conversation scene in your brain from this blob of JSON, but it's not just difficult; it's practically punishment.

15 minutes later, your coffee is cold. You stare at the scrolling screen and sigh.

You give up. You start a new conversation and let the AI write it again from scratch. And this time, the solution it gives seems to lack that "spark" from the version two weeks ago.

In that moment, you lost not just code, but a mental asset.

Your "Context Entropy" has exploded.

Why Is Your Memory "Leaking"?

Thermodynamics tells us that entropy (disorder) always tends to increase. In the era of AI-assisted programming, we are facing a brand new kind of entropy increase: the exponential proliferation of information fragments.

As AI becomes our "Junior Engineer," it produces massive amounts of code and conversation every minute. These fragments are scattered across different IDEs, different CLI tools, and hundreds of hidden directories in your local file system.

The scariest part isn't not finding the code, but not finding "why it was written that way."

For example, your current project uses axum instead of actix-web. This was definitely not a random choice. You vaguely remember that three months ago, you had a deep 2-hour debate with the AI. You discussed type safety of middleware, compile speeds, and even compared the latest community benchmarks.

That conversation contained incredibly valuable architectural decision logic.

But now, that conversation is gone. It dissolved like tears in rain into thousands of .json files on your hard drive.

We always assume that since GitHub hosts our code, we own everything. But code is just the result (The Output). In the AI era, "how you got to this result"—that is, your interaction process with AI, your prompt strategy, your debugging path—is the true "Craft".

And our current toolchain is ruthlessly discarding this craft.

Islands of Tools

Many geeks might say, "I have ripgrep, I have full-text search, what am I afraid of?"

But the reality is, traditional search tools face three unavoidable pitfalls when dealing with AI logs:

- Directory Isolation: Most CLI tools (like Claude Code) isolate sessions by the current working directory (CWD). The decisions you made in

~/project-asimply don't exist when you are in~/project-b. Your memory is physically severed by the file system. - The Pain of Mental Decoding:

grepcan indeed find keywords. But reading raw logs is like watching the code rain in The Matrix. Without rendering, without Markdown formatting, without syntax highlighting, you cannot scan it quickly. - Cognitive Barriers of Toolchains: The architecture you settled on in Cursor is invisible to your CLI tools. Your memory is forcibly sliced up by the toolchain.

We are like amnesiac craftsmen, reinventing the wheel every day, unable to remember how we built it yesterday.

A Viewer with an Omniscient Perspective

We need a new metaphor.

If the IDE is our "Workbench" and AI is our "Copilot," then we also need a "Black Box".

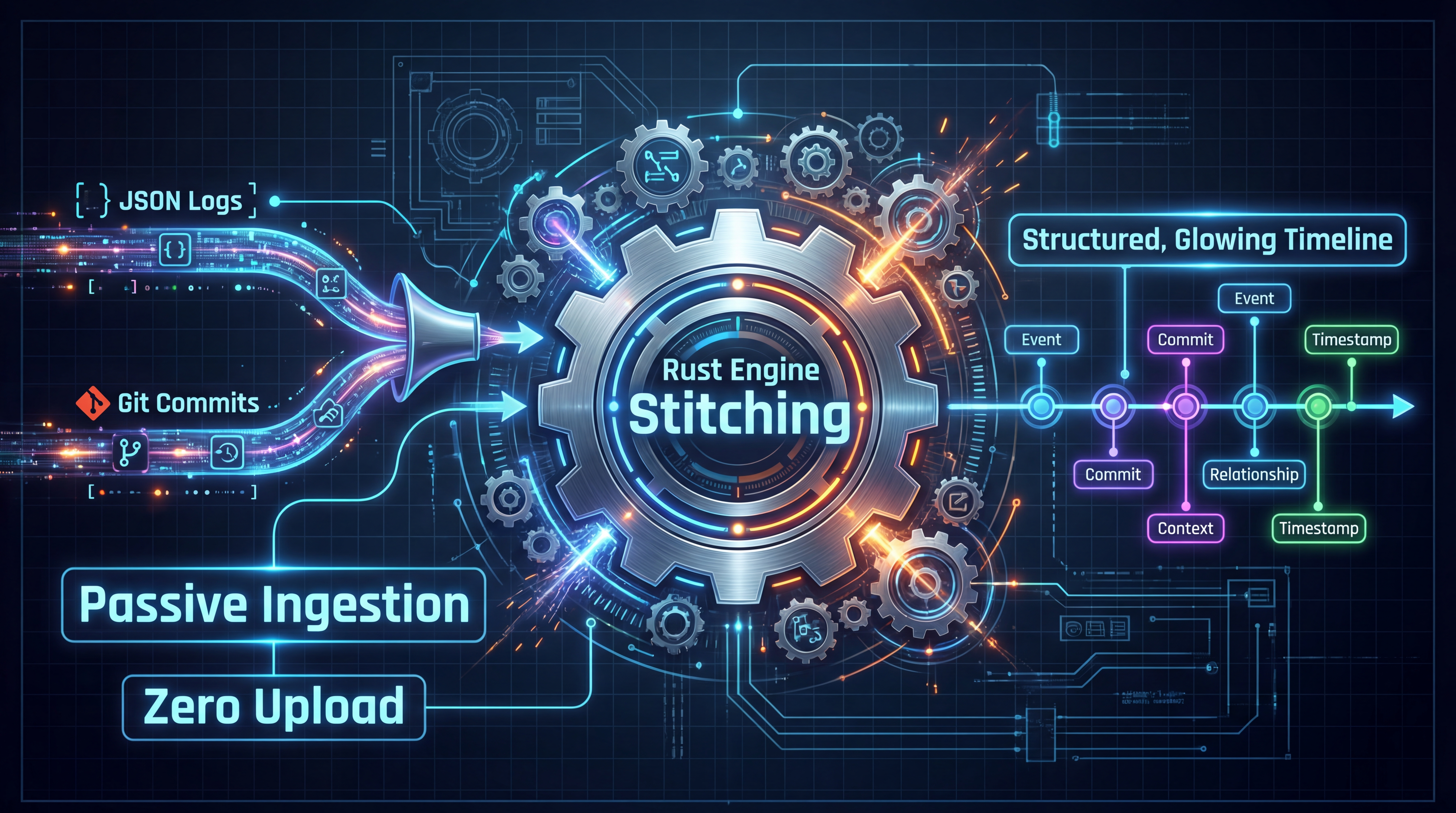

We need a tool that doesn't try to teach you how to code, doesn't try to interrupt your flow. It simply records everything, quietly and non-invasively.

It should be like a Viewer with X-ray vision. It can penetrate the isolation of file systems, penetrate the barriers of tools. It can stitch your scattered log fragments into a complete timeline with context.

It should be able to answer questions like:

- "Which project was I discussing Redis persistence strategies in last month?"

- "The code looks like X now, but what was the reason the AI suggested writing it as Y back then?"

This is the philosophy of Mantra.

We built it on three stubborn beliefs:

Local-First, Passive Ingestion.

Because we believe that every Prompt you write, every debate you have with AI, is your unique knowledge asset. They shouldn't vanish when the Session closes.

Code is the Result. Process is the Mantra (Craft).

Mantra is now available for trial

If you are tired of searching for memories in fragmented records, download and experience it.